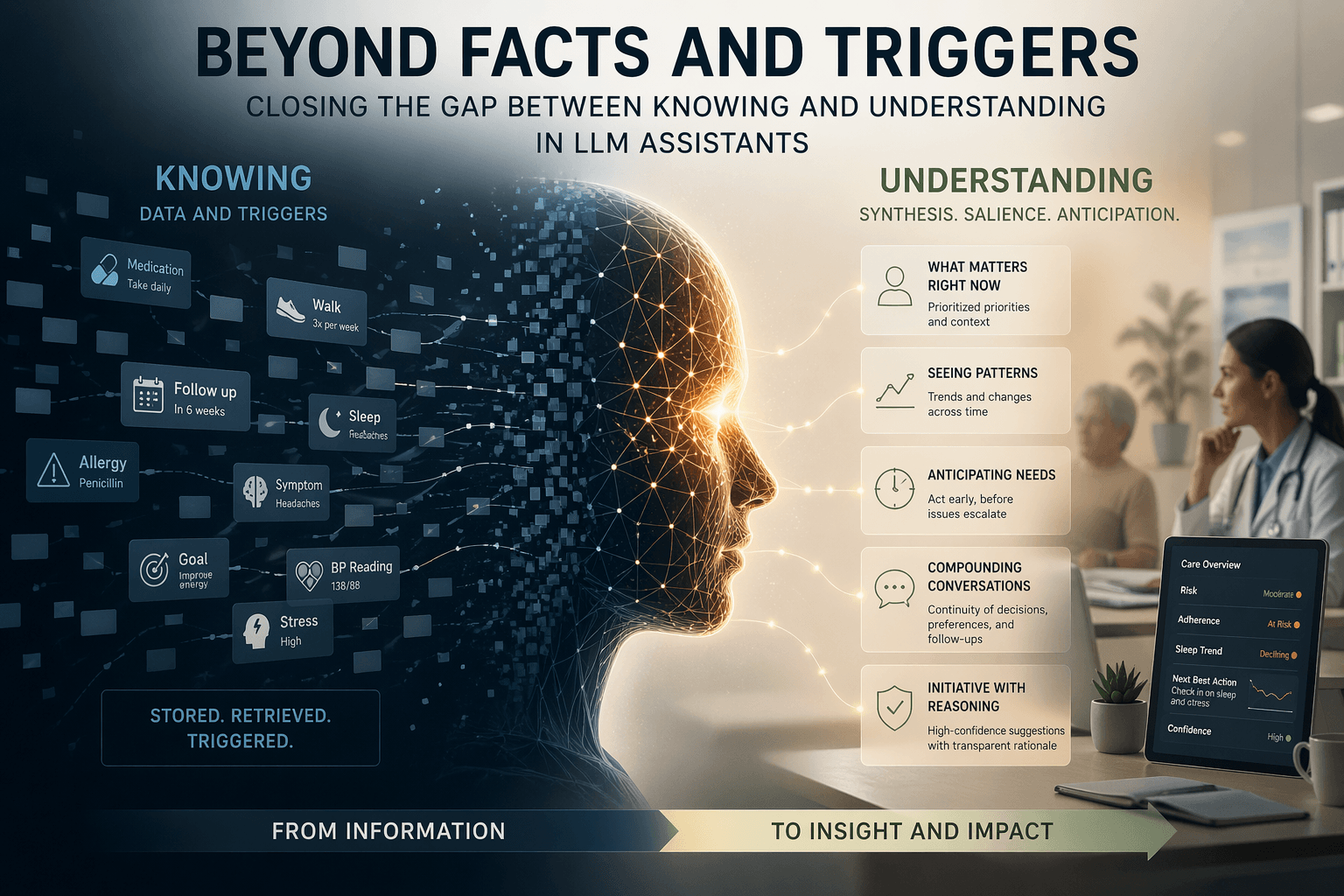

Beyond Facts and Triggers: Closing the Gap Between "Knowing" and "Understanding" in LLM Assistants

Reece Robinson, CTO, Orchestral

A patient leaves a clinic visit with a plan that looks perfect on paper: take medication daily, walk three times a week, track symptoms, follow up in six weeks.

A week later, it’s already slipping.

Not because the plan was forgotten. Not because the clinician didn’t capture the details. And not because the assistant couldn’t store the relevant information.

It slips because life happened: a few nights of bad sleep, a stressful deadline, a sick kid at home, a shift change that broke the routine. The data is still “true.” The assistant still “has” it. But the system doesn’t understand what changed, what matters right now, or what to do next.

That gap, between having data and having understanding, is where many LLM-based assistants plateau.

They become what I call data-and-trigger systems: they collect discrete user facts, retrieve semantically similar items, and fire scheduled or rule-based notifications. This architecture is useful and often the right starting point. But it systematically fails in clinical and personal-assistant settings where value depends on longitudinal understanding, contextual prioritisation, anticipation of needs, and adaptive interaction strategies over time.

Why data-and-trigger systems plateau

Data-centric systems are appealing because they’re comparatively easy to implement, easy to measure, and reasonably safe. Extract entities, store them, retrieve on similarity; schedule check-ins; react to simple conditions. You can evaluate whether the assistant remembered a preference or delivered a reminder on time.

But as soon as an assistant is expected to support real-world decision-making (health behaviour change, adherence, care planning, relationship dynamics, workload management), this design hits a ceiling. It can “know” many things without producing reliable, high-value guidance. It’s the difference between remembering what was said and understanding what matters.

Healthcare amplifies the limitation. Clinicians need reliable facts, but they also need interpretable synthesis across time, risk, context, and goals. Patients similarly benefit from insight and anticipation, not just reminders or summaries.

High-quality data is necessary (but not sufficient)

The critique of data-and-trigger approaches isn’t that data is unimportant. On the contrary, everything more advanced depends on good data as the substrate.

If the underlying facts are incomplete, stale, inconsistent, poorly attributed, or untrustworthy, then higher-order behaviour becomes fragile. Patterns become spurious, anticipation becomes annoying or unsafe, and the system starts drifting toward alert fatigue on one side, or timid non-intervention on the other. Narrative continuity also breaks: preferences get merged incorrectly, decisions are misremembered, and learning loops “learn” from outcomes that were driven by bad inputs.

In practice, “good data” means more than extracting statements. It requires provenance (who/what said it and when), validity horizons (“still true?”), confidence, standardisation (especially in health data), explicit handling of contradictions, and governance for auditability, consent, and update/forget policies.
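To make those requirements concrete, here is a minimal Python sketch of a fact record that carries provenance, a validity horizon, and confidence, with a simple contradiction check. The `Fact` schema, its field names, and `find_contradictions` are hypothetical, chosen for illustration; a real health deployment would also need standardised coding, consent, and audit handling, which this sketch leaves out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Fact:
    """One extracted statement plus the metadata needed to trust it (hypothetical schema)."""
    subject: str
    predicate: str
    value: str
    source: str                 # provenance: who or what asserted it
    observed_at: datetime       # provenance: when it was asserted
    confidence: float           # 0.0 to 1.0, assumed to come from the extraction step
    valid_for: Optional[timedelta] = None  # validity horizon; None means no expiry

    def is_current(self, now: datetime) -> bool:
        """True while the fact is still inside its validity horizon."""
        return self.valid_for is None or now <= self.observed_at + self.valid_for


def find_contradictions(facts: list[Fact], now: datetime) -> list[tuple[Fact, Fact]]:
    """Pair up current facts that share subject and predicate but disagree on value."""
    current = [f for f in facts if f.is_current(now)]
    pairs = []
    for i, a in enumerate(current):
        for b in current[i + 1:]:
            if (a.subject, a.predicate) == (b.subject, b.predicate) and a.value != b.value:
                pairs.append((a, b))
    return pairs


now = datetime(2025, 1, 15)
facts = [
    Fact("patient", "walks_per_week", "3", "clinic_note",
         datetime(2025, 1, 1), 0.9, timedelta(days=42)),
    Fact("patient", "walks_per_week", "0", "patient_message",
         datetime(2025, 1, 12), 0.7, timedelta(days=14)),
    # Past its 90-day horizon, so it is excluded from the contradiction check.
    Fact("patient", "recovering_from", "surgery", "intake_form",
         datetime(2024, 6, 1), 0.8, timedelta(days=90)),
]
conflicts = find_contradictions(facts, now)
```

Here the plan from the clinic note and the later patient message disagree, so they are surfaced as a pair rather than silently merged. How to resolve the pair (most recent wins, highest confidence wins, or ask the user) is a policy decision the sketch deliberately leaves open.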

High-quality data is the foundation. The problem is that the foundation alone does not produce understanding.

The missing capabilities that turn data into understanding

A data-and-trigger assistant tends to fail in a predictable set of ways.

1. It learns facts but not patterns.

It captures statements like “prefers oat milk” or “recovering from surgery,” yet fails to synthesise cross-source correlations that actually drive outcomes. Primary care is dominated by patterns: sleep and glucose variability, symptom clusters, stress-linked hypertension, adherence decay under schedule strain. Clinicians already have facts in the record; what they lack is time-efficient, defensible synthesis that connects the dots.

2. “Proactive” behaviour is usually scheduled, not anticipatory.

A reminder at 7:30am is automation. Anticipation is noticing the trajectory shift before failure: sleep collapsing before a demanding day, early adherence decline before it becomes non-adherence, scheduling conflicts that predict missed appointments. An assistant that can’t connect calendar, goals, constraints, and recent patterns will confuse “activity” with “helpfulness.”

3. The system lacks a real concept of “what matters right now.”

Most retrieval stacks treat relevance as semantic similarity plus recency. But lives, and clinical care, have seasons: acute episodes, recovery periods, high-stress weeks, new diagnoses. In healthcare, salience is the core of prioritisation: what’s on today’s agenda, which risks dominate, what can wait. Without a salience model, assistants surface plausible but irrelevant context while missing the thread that explains the current moment.

4. Conversations don’t compound.

Many systems extract facts from isolated messages but fail to build durable narrative memory: what was decided, what changed, what barriers persist, what’s unresolved, and what the response to prior plans has been. Longitudinal care depends on continuity. Without it, interactions keep restarting and the user stops investing.

5. The assistant has no meaningful initiative.

It reacts to questions or performs mechanical check-ins, but it doesn’t consistently bring up the right thing unprompted, at the right time, with a defensible rationale. A clinician assistant, in particular, should surface care gaps, contradictions, missing data, risk signals, and next-best actions without requiring perfect prompts.

6. There’s no feedback loop for helpfulness.

Responses are treated as one-off generations rather than an evolving policy tuned by outcomes. In healthcare, preference variation is enormous: clinicians differ in assertiveness, detail level, and workflow integration. Without explicit and implicit feedback, assistants become either noisy and ignored, or timid and unused.

7. The user/patient model is usually a flat bag of data.

Flat triples don’t represent hierarchy or causality well. Identity, values, goals, active priorities, constraints, and routines all end up stored at the same level. But clinical reasoning depends on structured context: goals of care, social determinants, caregiver supports, constraints, and values. Without hierarchy, the assistant struggles to adapt plans to reality, or to connect day-to-day changes to why they matter.
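As one illustration of the difference hierarchy makes, the Python sketch below nests routines under goals and goals under values, so a disrupted routine can be traced upward to the reason it matters. The three-level shape and the names `Value`, `Goal`, `Routine`, and `why_it_matters` are assumptions made for this example, not a proposed standard model.

```python
from dataclasses import dataclass, field


@dataclass
class Routine:
    name: str
    constraints: list[str] = field(default_factory=list)  # things that can break this routine


@dataclass
class Goal:
    name: str
    routines: list[Routine] = field(default_factory=list)  # routines that serve this goal


@dataclass
class Value:
    name: str
    goals: list[Goal] = field(default_factory=list)  # goals motivated by this value


def why_it_matters(values: list[Value], routine_name: str) -> list[str]:
    """Trace a routine upward to every goal and value it serves."""
    chains = []
    for value in values:
        for goal in value.goals:
            for routine in goal.routines:
                if routine.name == routine_name:
                    chains.append(f"{routine.name} -> {goal.name} -> {value.name}")
    return chains


model = [
    Value("staying independent", goals=[
        Goal("keep blood pressure controlled", routines=[
            Routine("daily medication", constraints=["shift changes"]),
            Routine("walk three times a week", constraints=["poor sleep", "childcare"]),
        ]),
    ]),
]

chains = why_it_matters(model, "daily medication")
# chains == ["daily medication -> keep blood pressure controlled -> staying independent"]
```

A flat store could hold the same strings, but it could not answer "why does a missed dose matter to this person?" without re-deriving the links each time.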

What this implies for clinician assistants

These gaps map directly onto clinical practice.

Facts are abundant in the EMR; insight is scarce. Triggers already exist as alerts, and they already cause fatigue. What clinicians need is selective anticipation that is justified, prioritisation that mirrors clinical reasoning, and continuity that preserves the patient story over time. Initiative should look like decision support, not generic advice. And feedback loops should translate into preference learning tied to measurable improvements.

A clinician assistant that retrieves data faster is incremental. A clinician assistant that synthesises, prioritises, anticipates, and adapts, while staying governed and interpretable, is a fundamentally different class of tool.

Real-world partnership with clinicians

This is where the architecture stops being an abstract “capability roadmap” and becomes real: the value of a layered clinical assistant is not to replace clinical judgement, but to augment the strengths of human clinicians.

Humans are uniquely good at the parts of care that are still largely invisible to machines: building rapport, reading tone, recognising subtle discomfort, and interpreting those intangible “human” signals that often change the meaning of an otherwise straightforward story. A well-designed assistant should protect and amplify that capacity by reducing cognitive load and offloading the work humans are comparatively less good at: systematically tracking longitudinal complexity, monitoring multi-dimensional trajectories, and spotting faint trends that only become obvious once a patient has already deteriorated.

That matters because so much of modern healthcare, particularly in Western systems, is structurally reactive. We often recognise patterns only when the signal is loud enough to force a change. A layered assistant has the potential to shift a slice of that work upstream by noticing early drift in the data (and the context around the data) before reactive changes are required.

Done well, this becomes a genuine partnership. The assistant handles the monitoring, synthesis, and “pattern vigilance” at scale, and it learns, over time, how individual clinicians reason, prioritise, and communicate. Clinicians stay focused on the human interaction and the nuanced judgement calls. The system, in turn, becomes better at delivering the right support at the right moment, in a form that fits how that clinician actually practises.

A pragmatic capability roadmap

The path forward doesn’t require an “AGI leap.” It requires layering capabilities that turn data into understanding.

Start with a fact-quality pipeline: provenance, standardisation, validity and confidence, contradiction handling, and governance. Advanced behaviour should degrade gracefully when confidence is low.

Then build a dynamic salience core: a compact, frequently updated representation of what is active and important right now, used to weight retrieval and shape interaction.
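One way to picture the salience core is as a small topic-to-weight map that is blended into retrieval scores. The Python sketch below assumes similarity scores are already computed elsewhere; `Memory`, `salience_boost`, `rank`, and the mixing weight `alpha` are hypothetical names and values used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    text: str
    similarity: float   # semantic similarity to the current query, 0..1 (assumed precomputed)
    topics: frozenset   # topic tags attached when the memory was written


def salience_boost(topics: frozenset, active: dict) -> float:
    """Highest active-salience weight among a memory's topics, or 0.0 if none are active."""
    return max((active.get(t, 0.0) for t in topics), default=0.0)


def rank(memories: list, active: dict, alpha: float = 0.5) -> list:
    """Order memories by a blend of similarity and salience.

    alpha is an illustrative mixing weight: 0 ignores salience, 1 ignores similarity.
    """
    def score(m: Memory) -> float:
        return (1 - alpha) * m.similarity + alpha * salience_boost(m.topics, active)
    return sorted(memories, key=score, reverse=True)


# The salience core: a compact map of what is active right now, refreshed often.
active_now = {"sleep": 0.9, "medication": 0.6}

memories = [
    Memory("Prefers oat milk", 0.80, frozenset({"diet"})),
    Memory("Slept under five hours three nights running", 0.55, frozenset({"sleep"})),
]

top = rank(memories, active_now)[0]
```

With salience switched off (`alpha=0`), the oat-milk preference wins on similarity alone. With the blend, the sleep observation ranks first because sleep is what is active this week.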

Next, add cross-source pattern analysis so the assistant can detect change and correlation across time, feeding that into salience rather than burying it in a summary.
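A very small example of change detection is sketched below in Python: it compares the mean of a recent window against an earlier baseline and converts the result into a bounded weight that could be written into the salience map. This is a plain z-score heuristic standing in for whatever pattern analysis a real system would use; it covers drift in a single series only, not cross-source correlation, and the function names, window size, and scaling constant are assumptions.

```python
from statistics import mean, stdev


def drift_score(series: list[float], recent: int = 3) -> float:
    """Z-score of the recent-window mean against the earlier baseline."""
    if len(series) < recent + 2:
        raise ValueError("need at least two baseline points plus the recent window")
    baseline, window = series[:-recent], series[-recent:]
    spread = stdev(baseline)
    if spread == 0:
        return 0.0  # flat baseline: no scale to judge the change against
    return (mean(window) - mean(baseline)) / spread


def to_salience(z: float, scale: float = 3.0) -> float:
    """Map drift magnitude to a 0..1 salience weight; scale is an illustrative choice."""
    return min(1.0, abs(z) / scale)


# Nightly sleep in hours: six stable nights, then three short ones.
sleep_hours = [7.2, 6.9, 7.4, 7.1, 7.0, 7.3, 5.1, 4.8, 5.0]
stable_hours = [7.2, 6.9, 7.4, 7.1, 7.0, 7.3, 7.1, 7.2, 7.0]

z_sleep = drift_score(sleep_hours)    # strongly negative: sleep has dropped
z_stable = drift_score(stable_hours)  # close to zero: nothing to flag

salience_update = {"sleep": to_salience(z_sleep)}
```

Writing the result into the salience map, rather than into a narrative summary, is what lets a detected change influence what the assistant retrieves and raises next.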

From there, ensure conversations compound by persisting evolving summaries of decisions, concerns, preferences, and follow-ups.

Only then does proactivity become valuable: a look-ahead engine that scans upcoming schedule, recent patterns, and active priorities to produce a very small number of high-confidence suggestions, with reasons.
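The shape of such a look-ahead pass can be sketched in a few lines of Python. The example below assumes drift magnitudes and event "demand" scores already exist as inputs; the `Event` and `Suggestion` types, the multiplicative confidence rule, and the thresholds are all hypothetical and chosen only to show the filtering behaviour.

```python
from dataclasses import dataclass


@dataclass
class Event:
    title: str
    days_ahead: int
    demand: float  # 0..1, how taxing the event is expected to be (assumed input)


@dataclass
class Suggestion:
    message: str
    reason: str        # every suggestion carries its rationale
    confidence: float  # 0..1


def look_ahead(events, drift, priorities, min_confidence=0.7, limit=2):
    """Return at most `limit` high-confidence suggestions, each with a reason."""
    candidates = []
    for topic, magnitude in drift.items():
        if topic not in priorities:
            continue  # drift outside active priorities is not worth an interruption
        for event in events:
            confidence = magnitude * event.demand  # illustrative scoring rule
            if confidence >= min_confidence:
                candidates.append(Suggestion(
                    message=f"Check in about {topic} before '{event.title}'",
                    reason=(f"{topic} has drifted ({magnitude:.2f}) and "
                            f"'{event.title}' is in {event.days_ahead} day(s)"),
                    confidence=confidence,
                ))
    candidates.sort(key=lambda s: s.confidence, reverse=True)
    return candidates[:limit]


events = [Event("quarterly deadline", 2, 0.9), Event("team lunch", 1, 0.2)]
drift = {"sleep": 0.9, "diet": 0.95}
priorities = {"sleep", "medication"}

suggestions = look_ahead(events, drift, priorities)
```

In this run only one suggestion survives: diet has drifted but is not an active priority, and the team lunch is not demanding enough to justify raising sleep. The hard cap and the confidence floor are what keep the engine from becoming another source of alert fatigue.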

Finally, close the loop with explicit and implicit feedback learning, and evolve from flat records to structured user/patient models that represent goals, constraints, priorities, and risks in an interpretable way.
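As a minimal sketch of a feedback loop, the Python example below tracks how often each category of suggestion is accepted and stops surfacing categories that are consistently dismissed. The `FeedbackPolicy` class, the add-one smoothing, and the `min_rate` threshold are illustrative assumptions; a production system would likely keep this per clinician or per user and combine it with richer implicit signals.

```python
from collections import defaultdict


class FeedbackPolicy:
    """Tracks per-category acceptance with add-one smoothing (illustrative)."""

    def __init__(self, min_rate: float = 0.3):
        self.min_rate = min_rate
        self.accepted = defaultdict(int)
        self.shown = defaultdict(int)

    def record(self, category: str, accepted: bool) -> None:
        """Log one outcome: explicit (thumbs up/down) or implicit (acted on/dismissed)."""
        self.shown[category] += 1
        if accepted:
            self.accepted[category] += 1

    def acceptance_rate(self, category: str) -> float:
        # Add-one smoothing: a category with no history starts at 0.5.
        return (self.accepted[category] + 1) / (self.shown[category] + 2)

    def should_surface(self, category: str) -> bool:
        return self.acceptance_rate(category) >= self.min_rate


policy = FeedbackPolicy()
for _ in range(3):
    policy.record("care_gap", accepted=True)
for _ in range(5):
    policy.record("generic_advice", accepted=False)

surface_care_gap = policy.should_surface("care_gap")        # rate 4/5
surface_generic = policy.should_surface("generic_advice")   # rate 1/7
surface_new = policy.should_surface("risk_signal")          # no history yet, rate 1/2
```

The smoothing means a new category gets a fair hearing before it can be suppressed, which guards against the assistant going quiet after a single dismissal.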

Conclusion

Data-and-trigger approaches provide solid infrastructure, but they cap out at “stored information + scheduled notifications.” The missing intelligence is not more data. It is validated data plus synthesis, salience, anticipation, compounding narrative continuity, meaningful initiative, and learning from feedback.

In clinical settings, the payoff is partnership: the system takes on systematic monitoring and longitudinal pattern vigilance, so clinicians can focus on judgement, context, and the human signals that still matter most.

That’s how assistants stop merely knowing and start reliably understanding, in the way that matters in longitudinal care and real life.